AlphaBot: Lane tracking with camera


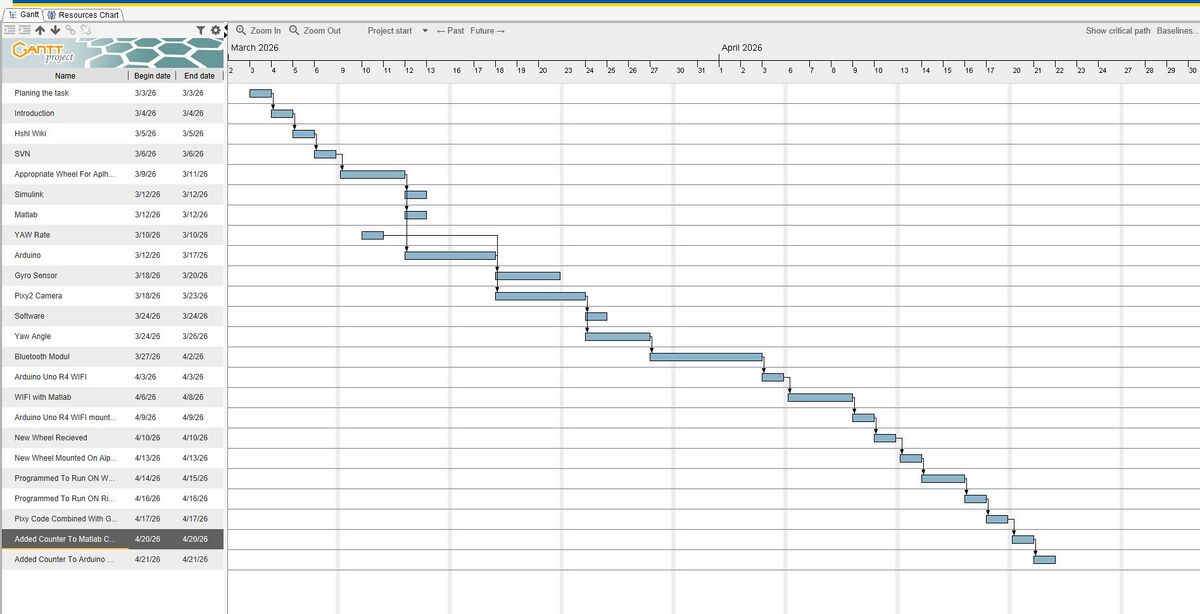


Current version as of 23 April 2026, 11:47

| Author: | Syed Muhammad Abis Rizvi |
| Type: | Practical semester |
| Degree programme: | ELE |
| Start date: | 02.03.2026 |
| Submission date: | 21.06.2026 |
| Supervisor: | Prof. Dr.-Ing. Schneider |
| Language: | DE EN |

Introduction

The AlphaBot by Waveshare is a robot platform that supports advanced navigation and sensing features. In this project, our task is to drive the AlphaBot within the right-hand lane along the course using a Pixy2 camera and to optimize its speed. We also added a GY-85 gyroscope IMU sensor to record the yaw rate, from which the yaw angle is later calculated. To obtain better output results, we replaced the AlphaBot's Arduino Uno board with an Arduino UNO R4 WiFi, so that accurate results can be sent via a web server and monitored in MATLAB. These components work together so that the AlphaBot can drive autonomously in real time.

Requirements

| Req. | Description | Priority |
|---|---|---|
| 1 | An AMR must drive autonomously in the right-hand lane. | 1 |
| 2 | The Topcon Robotic Total Station is used as the reference measurement system. | 1 |
| 3 | The AMR must evaluate the road data via camera (Pixy 2.1) to follow the lane. | 2 |
| 4 | The reference values must be recorded with MATLAB (x, y, θ). | 1 |
| 5 | Measurement errors must be appropriately filtered. | 1 |
| 6 | A two-dimensional digital map showing the robot's pose during movement must be provided as a MATLAB® file (.mat). | 1 |
| 7 | The solution path and solution must be documented in this wiki article. | 1 |
| 8 | An AlphaBot must be used as the AMR. | 1 |
| 9 | MATLAB®/Simulink must be used as the control software. | 1 |
| 10 | The yaw angle must be determined from the measured yaw rate and compared to the reference from Req. 4. | 1 |
| 11 | The AlphaBot's speed must be optimized to its maximum. | 1 |

Planning

I planned the AlphaBot in stages. First, I used the Pixy2 camera for lane detection on the Arduino. Once that worked, I mounted the GY-85 module on the AlphaBot to record the yaw rate and yaw angle, and again programmed this on the Arduino. At that point everything was working and I was getting all outputs on the Arduino Serial Monitor. I then added an HC-05 Bluetooth module to receive the output wirelessly in MATLAB, but unfortunately the connection lagged. I therefore replaced the AlphaBot's Arduino Uno with an Arduino UNO R4 WiFi, which delivers the output more reliably via a web server that is monitored in MATLAB. I get a separate output graph for each individual output, and I have also added a counter to the output results.

AlphaBot Project Gantt Chart Draft

Working principle

The AlphaBot uses the Pixy2 camera to detect the lane, which helps it position itself on the track. Based on this information the motors are driven, so that the AlphaBot runs autonomously on the track; it stops immediately when no line is detected. In the Pixy2 line-tracking mode, the horizontal x-coordinate ranges from 0 to frameWidth (79) and the vertical y-coordinate from 0 to frameHeight (52). The line/vector coordinates are computed on a 79×52 occupancy grid, since higher resolutions are not possible due to memory limitations, so they have a lower resolution than the camera image. This is why x = 39 (roughly half of 79) is used as the estimated horizontal center of the Pixy2 frame.
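The steering rule can be sketched as follows, assuming the head of the main vector (`m_x1`) is used as the lane position and a proportional controller converts the offset from x = 39 into wheel speeds. `laneControl`, `ControlOutput`, `kBaseSpeed` and `kGain` (and their values) are hypothetical and not taken from the project code; real gains would need tuning on the track.

```cpp
// Estimated horizontal center of the Pixy2 line-tracking frame (x = 0..79).
constexpr int kFrameCenterX = 39;
// Hypothetical tuning values: base PWM duty (0..255) and proportional gain.
constexpr int kBaseSpeed = 120;
constexpr int kGain = 4;

struct ControlOutput {
    int leftSpeed;   // PWM duty for the left motor, 0..255
    int rightSpeed;  // PWM duty for the right motor, 0..255
    int lineError;   // lateral error in grid cells (positive = line to the right)
};

// Limits a value to the valid 8-bit PWM range.
static int clampPwm(int value) {
    return value < 0 ? 0 : (value > 255 ? 255 : value);
}

// Computes wheel speeds from the x-coordinate of the vector head.
// If no line was found, both motors are stopped.
ControlOutput laneControl(bool lineFound, int vectorHeadX) {
    if (!lineFound) {
        return ControlOutput{0, 0, 0};
    }
    const int error = vectorHeadX - kFrameCenterX;
    const int correction = kGain * error;
    // Line to the right (error > 0): speed up the left wheel, slow the right one.
    return ControlOutput{clampPwm(kBaseSpeed + correction),
                         clampPwm(kBaseSpeed - correction), error};
}
```

On the robot, `lineFound` and `vectorHeadX` would typically come from `pixy.line.getMainFeatures()` (checking the `LINE_VECTOR` bit of its return value) and `pixy.line.vectors->m_x1`, as in the Pixy2 line-tracking examples.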

The GY-85 IMU sensor measures the yaw rate, which is integrated over time to determine the AlphaBot's yaw angle as it moves.
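The conversion from a raw gyroscope reading to a yaw rate can be sketched as below. It assumes the common GY-85 variant with an ITG-3205 gyroscope (big-endian 16-bit two's-complement output, sensitivity 14.375 LSB per °/s) mounted flat so that its Z axis measures yaw; the I2C register read is omitted, and `yawRateDps` is a hypothetical name.

```cpp
#include <stdint.h>

// ITG-3205 / ITG-3200 gyroscope sensitivity (LSB per degree per second).
constexpr float kGyroLsbPerDps = 14.375f;

// Combines the high and low bytes of the Z-axis gyro register pair
// (high byte first) and converts the signed count to degrees per second.
float yawRateDps(uint8_t highByte, uint8_t lowByte) {
    const int16_t raw =
        static_cast<int16_t>((static_cast<uint16_t>(highByte) << 8) | lowByte);
    return raw / kGyroLsbPerDps;
}
```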

For more stable output, I used an Arduino UNO R4 WiFi, which sends the output via a web server that can also be monitored in MATLAB.

All components work together so that the AlphaBot runs autonomously in real time.

Technical Overview

The system is built on the Waveshare AlphaBot, which has integrated motors. A Pixy2 camera is used to keep the AlphaBot on track.

A GY-85 IMU is used to record the yaw rate and yaw angle.

An Arduino UNO R4 WiFi collects the output and transmits it wirelessly in real time.

Pin Assignment

| Component | Module Pin | Connected Controller Pin |
|---|---|---|
| Pixy2 Camera (I2C) | SDA | A4 |
| | SCL | A5 |
| | VCC | 5V |
| | GND | GND |
| GY-85 IMU Sensor (I2C) | SDA | A4 (shared I2C) |
| | SCL | A5 (shared I2C) |
| | VCC | 3.3V / 5V |
| | GND | GND |
| Bluetooth / Serial | TX | RX (D0) |
| | RX | TX (D1) |
| | VCC | 5V / 3.3V |
| | GND | GND |
| Left Motor | PWMA | D6 (PWM) |
| | AIN1 | A1 |
| | AIN2 | A0 |
| Right Motor | PWMB | D5 (PWM) |
| | BIN1 | A2 |
| | BIN2 | A3 |
| Power Supply | Battery / VCC | AlphaBot power input; sensors powered via onboard voltage regulator |
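Each motor in the table is controlled by two direction inputs and one PWM input. A sketch of how a signed speed could be split into those three signals is shown below. The assumption that IN1 low / IN2 high means forward depends on the motor wiring and should be checked on the robot; `MotorCommand` and `toMotorCommand` are hypothetical names.

```cpp
// Logic levels and duty cycle for one motor channel of the driver.
struct MotorCommand {
    bool in1;  // level for AIN1 / BIN1
    bool in2;  // level for AIN2 / BIN2
    int pwm;   // duty cycle for PWMA / PWMB, 0..255
};

// speed in -255..255 (clamped); positive = forward.
// Assumption: forward is IN1 low, IN2 high; reverse swaps the levels.
MotorCommand toMotorCommand(int speed) {
    if (speed > 255) speed = 255;
    if (speed < -255) speed = -255;
    if (speed >= 0) {
        return MotorCommand{false, true, speed};
    }
    return MotorCommand{true, false, -speed};
}
```

For the left motor, the result would be applied with `digitalWrite(A1, cmd.in1)`, `digitalWrite(A0, cmd.in2)` and `analogWrite(6, cmd.pwm)`, following the pin table.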

Measurement method

Measurement is done using the AlphaBot's onboard sensors. The Pixy2 camera detects the lane position, from which the lateral error (the horizontal offset of the detected line from the frame center, x = 39) is calculated. The GY-85 IMU sensor measures the yaw rate, from which the yaw angle is determined.

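The yaw angle θ is obtained by integrating the measured yaw rate ω over time, starting from the initial angle θ₀. For samples taken at an interval Δt, the integral is approximated by the running sum in the second equation (rectangle rule):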

\[ \theta(t) = \theta_0 + \int_{0}^{t} \omega(\tau)\, d\tau \]

\[ \theta_k = \theta_{k-1} + \omega_k \cdot \Delta t \]
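A minimal sketch of the discrete update is shown below. It assumes yaw rates in °/s, timestamps in milliseconds (as returned by Arduino's `millis()`), and a constant gyro bias measured beforehand while the robot stands still; `YawIntegrator` is a hypothetical name. Removing the bias matters because any constant offset in ω turns into a yaw angle that drifts linearly with time.

```cpp
#include <stdint.h>

// Integrates yaw rate (deg/s) into yaw angle (deg) using the
// rectangle-rule update theta_k = theta_{k-1} + omega_k * dt.
struct YawIntegrator {
    float angleDeg = 0.0f;  // theta_k, starting at theta_0 = 0
    float biasDps = 0.0f;   // gyro offset measured while stationary (assumed)
    uint32_t lastMs = 0;    // timestamp of the previous sample
    bool hasSample = false; // the first sample only sets the time base

    // Feeds one yaw-rate sample taken at time nowMs; returns the new angle.
    float update(float rateDps, uint32_t nowMs) {
        if (hasSample) {
            // Unsigned subtraction stays correct across millis() overflow.
            const float dt = (nowMs - lastMs) / 1000.0f;
            angleDeg += (rateDps - biasDps) * dt;
        }
        lastMs = nowMs;
        hasSample = true;
        return angleDeg;
    }
};
```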

Measuring Circuit

The measuring circuit consists of the AlphaBot and the GY-85 IMU sensor, which records the yaw rate from which the yaw angle is computed.

Software

PixyMon V2 is used to configure the Pixy2 camera for lane tracking and to tune its vision and filtering settings.

The AlphaBot is programmed in Arduino C/C++.

The output results are monitored in MATLAB using graph plots.

Arduino IDE

The AlphaBot's software is created with the Arduino IDE, which offers a unified environment for writing, compiling, and uploading code to the microcontroller.

The program utilizes Arduino C/C++ and incorporates standard libraries like Wire.h for I2C communication and Pixy2I2C.h for the Pixy2 camera. The loop function operates continuously to read the sensors, compute the yaw rate and yaw angle, and manage the motors.

The Serial Monitor allows the data (line error, yaw rate, yaw angle) to be viewed in real time. The same data can also be monitored via the web server and in MATLAB.

Simulink / MATLAB

The AlphaBot’s behavior can be modeled, simulated, and analyzed using Simulink, a visual programming environment based on MATLAB.

MATLAB: it displays the output data sent by the Arduino UNO R4 WiFi, such as the yaw rate and yaw angle. MATLAB receives the data wirelessly and plots it together with the added counter.
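For MATLAB to plot the values against the counter, each sample needs a fixed text format. The sketch below writes one comma-separated line per sample with the counter first; the field order, the scaling to hundredths and the name `formatTelemetry` are assumptions, not the project's actual protocol. Sending scaled integers avoids relying on floating-point `printf` support, which some Arduino cores (such as the AVR core of the original Uno) do not enable by default.

```cpp
#include <math.h>
#include <stdint.h>
#include <stdio.h>

// Writes "counter,lineError,yawRate,yawAngle\n" into buf, with the yaw rate
// (deg/s) and yaw angle (deg) scaled by 100 and rounded (12.34 -> 1234).
// Returns the line length, or -1 on an encoding error or if buf is too small.
int formatTelemetry(char* buf, size_t size, uint32_t counter, int lineError,
                    float yawRateDps, float yawAngleDeg) {
    const long rate = lroundf(yawRateDps * 100.0f);
    const long angle = lroundf(yawAngleDeg * 100.0f);
    const int n = snprintf(buf, size, "%lu,%d,%ld,%ld\n",
                           static_cast<unsigned long>(counter), lineError,
                           rate, angle);
    if (n < 0 || static_cast<size_t>(n) >= size) {
        return -1;  // encoding error or truncated output
    }
    return n;
}
```

The resulting line can be written to the Serial Monitor or to a web-server client; on the MATLAB side, the fields can be split at the commas and the last two divided by 100.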

Measurement

The measurement system records the robot's navigation performance using its onboard sensors: the Pixy2 camera for lane detection and the GY-85 IMU sensor for measuring the yaw rate and yaw angle.

Video

Datasheet

| Component | Model / Type | Key Specifications | Function in Project |
|---|---|---|---|
| AlphaBot | Waveshare AlphaBot | 2 DC motors, motor driver, chassis, 6–12V power input | Mobile platform for autonomous navigation |
| Pixy2 Camera | Pixy2 CMUcam5 | 60 fps; I2C/SPI/UART interface; line-tracking mode | Lane detection and position measurement |
| GY-85 IMU | GY-85 (ADXL345 + ITG-3205 + HMC5883L) | 3-axis gyroscope, accelerometer and magnetometer; I2C interface | Measures yaw rate, heading, and motion data |
| Bluetooth Module | HC-05 / HC-06 | UART interface, 3.3–5V operation, 10 m range | Wireless telemetry of sensor and motor data |

Related Links

Connecting Pixy to Arduino guide: https://docs.pixycam.com/wiki/doku.php?id=wiki:v2:hooking_up_pixy_to_a_microcontroller_-28like_an_arduino-29

Line tracking through PixyMon: https://docs.pixycam.com/wiki/doku.php?id=wiki:v2:line_quickstart

Line tracking API: https://docs.pixycam.com/wiki/doku.php?id=wiki:v2:line_api

SVN-Repository

https://svn.hshl.de/svn/HSHL_Projekte/trunk/AlphaBot

→ Back to the main article: Aufbau und Test eines Autonomen Fahrzeugs (Design and testing of an autonomous vehicle)